Folding Paper with ChatGPT

Everyone and their dog is posting about ChatGPT, OpenAI’s newest AI language model, so I decided to try some origami-related prompts. While I am impressed by the system overall, and it does an excellent job on many subjects, paperfolding doesn’t seem to be one of them at this time. As usual, watching a system fail can be more interesting than watching it succeed.

Encyclopedic knowledge and consistency

Let’s start off with some encyclopedic knowledge, using the prompt What do you know about origami? The response seems typical of ChatGPT in that it is mostly right and written quite convincingly. All sentences are grammatically correct and make some sense, and they could pass for being written by a human, even if the style is somewhat dull. However, some other properties of ChatGPT’s writing also pop up, which other testers have reported as well.

First of all, it confidently presents information that is uncertain or even plain wrong. The “origami originated in Japan in the 17th century” bit is an oversimplification, to say the least, but it is consistent with what Google’s top answer shows for the query when did origami originate? That answer comes from some random site on the web, certainly not a reliable origami-specific or even general source. In reality, it’s complicated, but origami is older than that. By the way, the Wikipedia article is certainly more reliable, but since I haven’t fact-checked it sentence by sentence, I can’t confirm it’s 100% accurate.

Secondly, the response contains a lot of fluff that doesn’t really mean much. Statements such as the whole third paragraph (There are many different styles and techniques used in origami…) are generic and could apply to any art or craft. On the other hand, such filler is probably often accepted or even encouraged in student essays, so as far as cheating on essays goes, this piece would likely pass just fine.

I continued the conversation to see how the bot would react to my pointing out an inconsistency in its description (it defined origami as always folded from a single sheet, so I wrote But there is modular origami which uses multiple sheets). It quickly changed its stance and talked about modular origami. Again, it said much more than it was asked for and, apart from the definition, provided this rather arbitrary statement: Modular origami can be more challenging than traditional origami….

I tried to drill down, since some people reported that one can get much better answers by asking follow-up questions (and in some cases I have seen this myself). To my enquiry But origami is folding a single sheet of paper, so how can modular origami be made of multiple sheets and still be origami? I got a reply that mostly reiterated what had been said previously and, in a way, did address my concern. However, it felt a bit like a manipulative bait-and-switch along the lines of: yes, I said origami is folded from a single sheet, but I actually meant traditional origami; in general, it doesn’t have to be. When I see ChatGPT make things up on the fly, deliver them very convincingly despite internal inconsistencies, and then swerve whenever it is challenged, certain politicians come to mind. Some are already very good at this, but tools that could make them even more effective sound potentially dangerous.

One more thing to notice is that the system doesn’t quite seem to find the right tone in its answers. You ask for a definition and get some unfounded opinions in the package. Or the answer to a rather casual question sounds very formal. This certainly makes the response sound less human than it could. I’m not sure whether this behavior was introduced on purpose or is just a side effect of how the system works. Many answers also seem to roughly follow a template and are structured like mini-essays, even if this form is a bit ridiculous for such a short response. Bart Simpson’s essay on maize comes to mind.

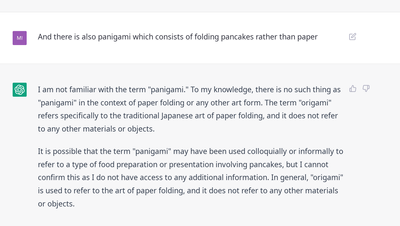

I was pleasantly surprised that, despite generating some BS itself, ChatGPT was not so easily led astray by my own BS when I mentioned that there is also panigami, which consists of folding pancakes rather than paper. In this case, it clearly stated that it did not know such a term. On the other hand, it insisted strongly that only paper is folded in origami, which is not true (and which contrasts with its other statements, which are often vague and imprecise).

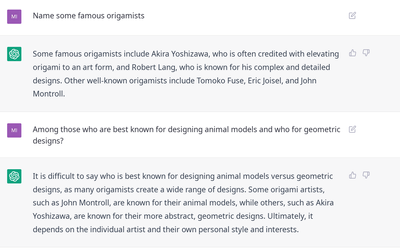

Since ChatGPT seems to be good at trivia (basically, information retrieval with a text UI), I asked it to Name some famous origamists. The response makes sense: all the listed people are indeed well known and their descriptions are correct. I could nitpick about the limited way Robert Lang’s contribution is described, but while not perfect, the response is completely reasonable. However, when drilling down by asking Among those who are best known for designing animal models and who for geometric designs?, we get into BS territory. The first sentence, It is difficult to say…, is generic, but in most cases one can get away with such meaningless filler. Here, however, it is not “difficult to say”: apart from Robert Lang, who is indeed a very versatile creator, these people are clearly associated with specific genres. Listing John Montroll as known mostly for animals is true (he has some geometric folds in his portfolio, but these are less popular), but for Akira Yoshizawa the response is completely false: Yoshizawa is as clearly associated with animals as can be.

ChatGPT is not aware of any origami conventions in Europe. There are quite a few, but I don’t blame it; I appreciate it when it knows what it doesn’t know. The results I got when asking about origami books are interesting. This looks like a pure information retrieval question, so it should be easy, but apparently it isn’t. The first book is fictional, at least as far as I am aware. I wonder where the model got that one from and whether the last name Childs could play a role here (there are lots of origami books for children). The last book, The Origami Bible by Nick Robinson, actually exists. The three in between combine actual origami book titles with the wrong authors.

Poetry

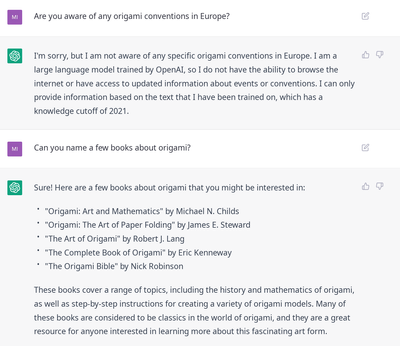

I also generated the obligatory poem and found it pretty hilarious. It’s rough, but I’ve seen poems written by people for random occasions, and they are usually worse. I think this speaks more to how uncritical people are of their own work than to how good ChatGPT is, but still: in the land of the blind, where the text doesn’t have to make sense and rhymes are rarely good, the one-eyed ChatGPT could be king. Style transfer is probably the part of ChatGPT that works best (and makes it so good at BS). It’s fun to play with, too.

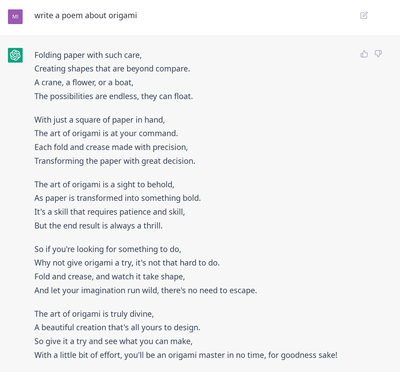

So, I couldn’t resist asking the AI to mimic an author with a very distinct style, H.P. Lovecraft. Hilarity ensued. The poem is written in free verse, and I wouldn’t say it follows Lovecraft’s poetic style, but it contains enough keywords from his prose to be funny and to look clearly Lovecraftian, even if it really isn’t. See the image for the full text. One sentence even makes sense, as anyone who has pondered in horror the countless hours spent on this seemingly innocuous hobby can confirm:

For those who dare to tamper,

With the dark arts of origami,

Do so at their own peril.

Actual folding

It’s time to do some actual origami now, so I ask ChatGPT How can I fold an origami crane?. This is a simple and very popular model, so if anything is going to work, this should be it. The instructions start off with: To fold an origami crane, you will need a square piece of paper and a flat surface to work on. So far, so good.

The first three steps are legit. While the question is about a very simple model, it’s actually a bit tricky, since even simple models can usually be folded in more than one way. Unfortunately, at step 4 things break down. It’s not clear what exactly the step is supposed to mean, and even after trying different options, it doesn’t make sense. The most liberal interpretation I came up with is folding one corner after another (splitting this step into two), in which case it can at least be executed. It’s described in a weird way, but it allows us to continue and actually makes sense for one way of folding the model. Unfortunately, things fall apart further in the following steps. They mention “the triangle you just made”, but we unfolded everything in the last step, so there is no triangle anymore. If we consider the creases on the unfolded paper, there are multiple triangles, and it’s unclear which one is meant. Then the instructions abruptly terminate in the middle of step 7, probably due to limits on the length of the chatbot’s output.

For my second attempt, I got a different sequence, this time starting with two diagonal creases (also a reasonable approach), but things stopped making sense at step 3. This set of instructions had shorter step descriptions, so it got to step 10 before being cut off.

I decided to try a paper boat next. ChatGPT knows it’s folded from a rectangle rather than a square and names sensible choices for the type of paper to use. Unfortunately, the instructions themselves aren’t good and, as with the crane, quickly descend into impossibility and inconsistency. At least they were short enough for the whole list to be produced.

One thing to note about these instructions is that style transfer works well: even the steps that make no sense sound like folding instructions, and if I skimmed over them without trying to actually understand the text, I could be fooled into believing they were taken from an actual origami tutorial.

When testing image generation AI earlier, I used the crane and the paper boat as examples of two simple models and noticed that the boat worked exceptionally well compared to other subjects, probably because it is better represented in the training data. The same was true for ChatGPT: the instructions for the crane and the boat at least made sense superficially. The results I got for a dragon and an elephant contained much more fluff and made much less sense. Interestingly, they contained some details relevant to the animal in question: the instructions for the dragon mentioned wings and claws, while those for the elephant mentioned ears. Both followed a very similar template and were very short (5 steps, no cutoff). The last step made me smirk, as it would suit any complex model (but not one of 5 steps, even if they were actually foldable): Finally, open up the model and carefully shape it to give the elephant a more realistic appearance. This may require some adjustments and fine-tuning to get the desired effect.

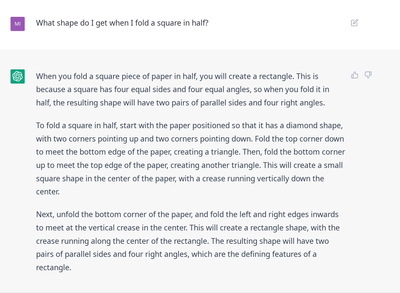

Since folding actual models didn’t go well, I decided to test the basics by asking What shape do I get when I fold a square in half? There are two obvious ways to do it: edge to edge or along a diagonal. ChatGPT chose the former method in the first paragraph, but in the second paragraph started describing the latter. Even though the first fold is not correct (there is no bottom edge to fold to), it could pass for folding along the diagonal. However, the description doesn’t stop there and goes on to describe some weird and mostly internally inconsistent folds for another paragraph and a half. Changing the prompt to Explain step by step how I can fold a square in half and what shape I get at the end did produce instructions split into individual steps, but of no better quality than before.
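
For reference, both ways of folding a square in half can be checked with a little plane geometry. The sketch below is a minimal illustration, assuming an idealized zero-thickness unit square with corners at (0, 0) and (1, 1); the helper names (`clip_left`, `reflect`, `fold_in_half`, `area`) are hypothetical and not taken from any origami software. It keeps the half of the square on one side of the crease (a single Sutherland-Hodgman clipping pass), mirrors the other half across the crease, and checks that the flap lands exactly on top, so the outline of the folded paper is simply the stationary half.

```python
from fractions import Fraction as F


def side(p, a, b):
    """Cross product: > 0 if p is left of the directed line a -> b, 0 if on it."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def reflect(p, a, b):
    """Mirror point p across the line through a and b (the crease)."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / (dx * dx + dy * dy)
    fx, fy = a[0] + t * dx, a[1] + t * dy  # foot of the perpendicular from p
    return (2 * fx - p[0], 2 * fy - p[1])


def clip_left(poly, a, b):
    """Part of a convex polygon on or left of a -> b (one Sutherland-Hodgman pass)."""
    out = []
    for i, cur in enumerate(poly):
        nxt = poly[(i + 1) % len(poly)]
        sc, sn = side(cur, a, b), side(nxt, a, b)
        if sc >= 0:
            out.append(cur)
        if sc * sn < 0:  # this edge crosses the crease: add the crossing point
            t = sc / (sc - sn)
            out.append((cur[0] + t * (nxt[0] - cur[0]), cur[1] + t * (nxt[1] - cur[1])))
    return out


def fold_in_half(poly, a, b):
    """Fold the part right of a -> b over onto the left part; return the folded outline."""
    stay = clip_left(poly, a, b)
    flap = clip_left(poly, b, a)  # reversing the line direction selects the other side
    if {reflect(p, a, b) for p in flap} != set(stay):
        raise ValueError("the flap does not land exactly on the other half")
    return stay


def area(poly):
    """Shoelace formula for a simple polygon."""
    s = sum(x1 * y2 - x2 * y1
            for (x1, y1), (x2, y2) in zip(poly, poly[1:] + poly[:1]))
    return abs(s) / 2


square = [(F(0), F(0)), (F(1), F(0)), (F(1), F(1)), (F(0), F(1))]
edge = fold_in_half(square, (F(1, 2), F(0)), (F(1, 2), F(1)))  # edge-to-edge fold
diag = fold_in_half(square, (F(0), F(0)), (F(1), F(1)))        # diagonal fold
print(len(edge), area(edge))  # 4 1/2
print(len(diag), area(diag))  # 3 1/2
```

The edge-to-edge fold leaves four corners (a 1 by 1/2 rectangle) and the diagonal fold three (a right isosceles triangle); both cover half the area of the original square. Exact fractions are used instead of floats so that the "does the flap land on the other half" check is an exact comparison.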

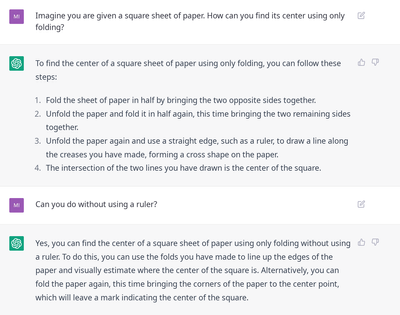

To test some basic origami math constructions, I asked Imagine you are given a square sheet of paper. How can you find its center using only folding? The instructions I got back start out correct, but then, all of a sudden, in step 3, instead of stating that the center is the intersection point of the two creases, ChatGPT asks me to use a ruler and draw a line, even though I explicitly asked for folding only. In step 4, however, it correctly states where the center point lies. When pressed with Can you do without using a ruler?, the bot correctly replies Yes but then describes an impossible procedure.
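
For comparison, the folding-only construction is easy to model. In a minimal sketch, assuming the same idealized unit square, a fold that brings one point onto another leaves a crease along the perpendicular bisector of those two points (this is usually listed as the second Huzita-Hatori axiom). Folding each pair of opposite corners together therefore creases both diagonals, and the center is simply where the two creases cross; no ruler is involved. The function names `crease_for` and `intersect` are hypothetical.

```python
from fractions import Fraction as F


def crease_for(p, q):
    """Crease left by folding point p onto point q: the perpendicular bisector of pq,
    returned as two points lying on the crease line."""
    mx, my = (p[0] + q[0]) / 2, (p[1] + q[1]) / 2
    dx, dy = q[0] - p[0], q[1] - p[1]
    return (mx, my), (mx - dy, my + dx)  # midpoint, and midpoint shifted perpendicular to pq


def intersect(line1, line2):
    """Intersection point of two lines, each given by two points (Cramer's rule)."""
    (x1, y1), (x2, y2) = line1
    (x3, y3), (x4, y4) = line2
    d = (x1 - x2) * (y3 - y4) - (y1 - y2) * (x3 - x4)
    if d == 0:
        raise ValueError("the creases are parallel")
    a = x1 * y2 - y1 * x2
    b = x3 * y4 - y3 * x4
    return ((a * (x3 - x4) - (x1 - x2) * b) / d,
            (a * (y3 - y4) - (y1 - y2) * b) / d)


# Corners of the unit square, counterclockwise from the bottom left.
A, B, C, D = (F(0), F(0)), (F(1), F(0)), (F(1), F(1)), (F(0), F(1))
center = intersect(crease_for(A, C), crease_for(B, D))  # fold each corner onto its opposite
print(center)  # (Fraction(1, 2), Fraction(1, 2))
```

The same function works for the other common method: folding A onto B and then A onto D produces the two "book fold" creases, which cross at the same point.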

Conclusions

Despite its shortcomings, the system is impressive. However, origami-related questions are handled rather poorly, even compared to other topics. This may be due to sparse training data: especially for folding instructions, there are probably not many good sources. Worse, a textual description of folding steps usually just supplements the pictures, and without the context they provide, it is incomplete. Of course, just as in mathematical reasoning, the (con)sequence of individual steps matters a lot, and while even simple models such as Markov chains can produce pretty convincing purple prose, text that should represent a consistent line of reasoning is much harder to generate. Remember, this is a text generator, not a reasoning model.
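
To illustrate the point about Markov chains, here is a minimal word-level Markov chain text generator. It is only a sketch of the classic technique, not how ChatGPT works, and the tiny corpus is made up for this example. Each generated word depends only on the word before it, so every individual transition appeared somewhere in the source text, yet nothing keeps track of what the paper actually looks like: the output can sound like folding instructions without describing any consistent sequence of folds.

```python
import random
from collections import defaultdict


def build_chain(text, order=1):
    """Map each tuple of `order` consecutive words to the list of words that followed it."""
    words = text.split()
    chain = defaultdict(list)
    for i in range(len(words) - order):
        chain[tuple(words[i:i + order])].append(words[i + order])
    return chain


def generate(chain, length=20, seed=None):
    """Random walk over the chain: each new word depends only on the previous `order` words."""
    rng = random.Random(seed)
    state = rng.choice(list(chain))
    out = list(state)
    while len(out) < length:
        followers = chain.get(state)
        if not followers:
            break  # dead end: this state only occurred at the very end of the corpus
        out.append(rng.choice(followers))
        state = tuple(out[-len(state):])
    return " ".join(out)


# A made-up corpus in the style of folding instructions (illustrative only).
corpus = (
    "Fold the square in half along the diagonal and unfold. "
    "Fold the top corner down to the center. "
    "Fold the bottom edge up to the center crease. "
    "Turn the model over and fold the left flap to the right."
)
chain = build_chain(corpus)
print(generate(chain, length=15, seed=42))
```

Raising `order` makes the output copy longer stretches of the corpus verbatim, but it does not add any notion of a consistent state of the paper between steps.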

Many prompts exhibited behavior also found for other topics, such as generating mostly correct replies for some queries while presenting made-up information with great confidence for others. The question of when origami originated shows that learning from information on the internet can be tricky, for humans and automata alike. Style transfer works very well, and if you just skim over the text without trying to understand it, it mimics the right genre, whether it be origami folding instructions or a poem by H.P. Lovecraft. Putting more sense into the text, as ChatGPT already often does for many topics, seems within reach, unless the lack of training data prevents it.

PS: I’m working on yet another piece on origami and AI, so stay tuned.
